| © 2022 Black Swan Telecom Journal | • | protecting and growing a robust communications business | • a service of | |


September 2017

Metadata Toolkit: Mediating the IP Network in Support of Fresh Security Apps

Do you recall how crucial network billing mediation was to the growth of the telecom software business?

When mediation knowledge became affordable, it became easier to replace or mix-and-match billing software. Mediation also catalyzed fraud management as a software category, and it was crucial to the rise of telecom analytics too.

Here’s my point: mediation took billing data extraction and analysis beyond the realm of network experts, and thereby brought to life scores of new software applications and suppliers.

Turning now to the subject of IP network security, we must admit that it’s still locked in a “pre-mediation” world because solutions for IP security are still not affordable for small- to mid-sized telecoms and OTTs. Capturing and storing security-related network data still requires the deep network expertise of gatekeepers — vendors of proprietary devices, service assurance systems, and network security integrators who command high prices.

But now, some innovators have arrived to help operators cost-effectively abstract the complexity of network data capture to ease the pain of software development in security and network optimization apps.

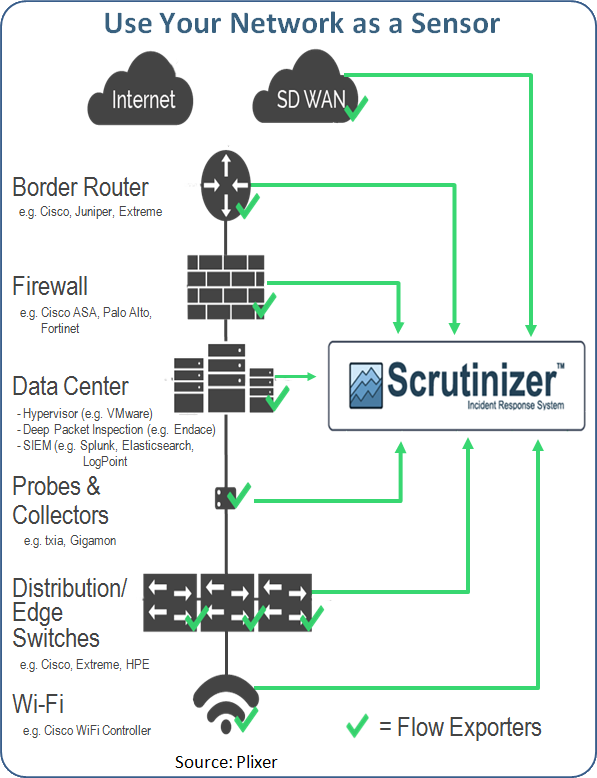

Plixer is one such company. Its mission is to capture and organize metadata from the infrastructure: the switches, routers, and firewalls. So when a security event occurs, you can quickly answer the key questions (who, what, where, when, why, and how?) because the information sits in one readily accessed database.

We’re pleased to interview Plixer’s Director of Strategic Relationships and Marketing, Bob Noel, who briefs us on his company’s way of democratizing network data.

| Dan Baker, Editor, Black Swan: Bob, can you tell us how you’re able to capture hundreds of network parameters and make them accessible? |

Bob Noel: Well, Dan, the secret sauce is metadata. We are collecting the data from the infrastructure itself by tapping into the equipment you have via a few key protocols that are well-understood.

One of those protocols is NetFlow, invented by Cisco many years ago. Because there were a number of different versions of NetFlow, the industry decided to standardize on a version called IPFIX.

So anybody who exports data and metadata about the source and destination of traffic, the users, the protocols, the applications, and all of those things can export via IPFIX to a company like us.

Our product is called Scrutinizer and its mission is to gather data from all points of telemetry — your switches, routers, firewalls, and probes. All these very different devices transmit summarized data back to us. And we put all that in a single database that gives our customers the ability to very quickly report on that traffic.

We also have the ability to run behavior analysis against that database to determine the who, what, when, and where. You can also filter that traffic data to do rapid root cause analysis.

There are a lot of technologies in the industry that tell companies: “Look, you have to take a piece of software called an agent and deploy it on your devices, whether they are IoT devices or phones or whatever,” and that gives that company some visibility.
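To make the idea of a single flow database concrete, here is a minimal sketch in Python. It is not Scrutinizer’s schema or API: the table layout, the field names, the sample records, and the helper functions `build_store`, `top_talkers`, and `flows_for_host` are all assumptions made for illustration, and real IPFIX exports carry many more fields.

```python
import sqlite3

# Hypothetical, simplified flow summaries as they might arrive from
# several exporters. Field names and values are illustrative only.
# (exporter, start_ts, src_ip, dst_ip, dst_port, protocol, bytes)
FLOWS = [
    ("router-1",   1000, "10.0.0.5", "93.184.216.34", 443, "tcp", 12000),
    ("firewall-1", 1005, "10.0.0.5", "93.184.216.34", 443, "tcp", 3000),
    ("switch-2",   1010, "10.0.0.9", "10.0.0.20",      53, "udp", 400),
    ("router-1",   1020, "10.0.0.9", "198.51.100.7",   22, "tcp", 90000),
]


def build_store(flows):
    """Load flow summaries from every exporter into one in-memory table."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE flows (exporter TEXT, start_ts INTEGER, src_ip TEXT, "
        "dst_ip TEXT, dst_port INTEGER, protocol TEXT, bytes INTEGER)"
    )
    db.executemany("INSERT INTO flows VALUES (?, ?, ?, ?, ?, ?, ?)", flows)
    return db


def top_talkers(db, since_ts=0):
    """Report total bytes per source address, largest first."""
    rows = db.execute(
        "SELECT src_ip, SUM(bytes) AS total FROM flows "
        "WHERE start_ts >= ? GROUP BY src_ip ORDER BY total DESC",
        (since_ts,),
    )
    return rows.fetchall()


def flows_for_host(db, ip):
    """Filter to every conversation a host took part in, oldest first."""
    rows = db.execute(
        "SELECT exporter, start_ts, dst_ip, dst_port, protocol, bytes "
        "FROM flows WHERE src_ip = ? OR dst_ip = ? ORDER BY start_ts",
        (ip, ip),
    )
    return rows.fetchall()


db = build_store(FLOWS)
print(top_talkers(db))
print(flows_for_host(db, "10.0.0.5"))
```

Because every exporter writes into the same table, a report (top talkers) and a filter (one host’s conversations) are both plain queries, which is the point of consolidating the telemetry.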

We go to the industry and basically say: “You know, there are already way too many agents on all of these devices and it’s too much to manage.”

And if we look at the traffic as it flows across the network and can associate that traffic or conversation with the device, then we can do behavior analysis on it without needing a software agent on the device.

We can look for devices that behave in an abnormal way. Maybe they are talking to servers that they have never talked to before or protocols they have never used before. Things like that.
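A minimal sketch of that kind of check, assuming a simple per-device baseline of previously seen peers and protocols, might look like the following. The `Baseline` class, its method names, and the finding labels are hypothetical, and a production system would use far richer models than set membership.

```python
from collections import defaultdict


class Baseline:
    """Remembers which peers and protocols each device has used before."""

    def __init__(self):
        self.peers = defaultdict(set)
        self.protocols = defaultdict(set)

    def learn(self, device, peer, protocol):
        """Record an observed conversation as normal behavior."""
        self.peers[device].add(peer)
        self.protocols[device].add(protocol)

    def check(self, device, peer, protocol):
        """Return labels for anything this device has never done before."""
        findings = []
        # .get() avoids creating empty baseline entries for unknown devices.
        if peer not in self.peers.get(device, set()):
            findings.append("new_peer")
        if protocol not in self.protocols.get(device, set()):
            findings.append("new_protocol")
        return findings


baseline = Baseline()
baseline.learn("camera-1", "10.0.0.20", "https")

# A familiar conversation produces no findings.
print(baseline.check("camera-1", "10.0.0.20", "https"))
# A never-seen server over a never-used protocol produces two findings.
print(baseline.check("camera-1", "203.0.113.9", "ssh"))
```

The baseline is built only from flow records observed on the network, so nothing has to be installed on the device itself.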

So we can become a detection path for those customers. When there’s something going on in your network, say a security event, we can send alerts, and our database can report all the way through the forensics chain of delivering an incident response.

| How do you position yourself in the security space? There are some huge companies offering integrated security systems such as SIEM (security information and event management) tools. |

Well, for decades, the industry has been moving towards the idea of "better context" on their data. Companies have been spending money on technology products in the name of prevention. They bought firewalls and intrusion detection systems. They bought intrusion prevention and SIEMs. The trouble is that every one of these products creates a silo of security-related data.

As far as SIEMs go, time after time, when security professionals are surveyed, the majority say their SIEM solution gives them good information, but that it lacks actionable information. It lacks context.

What a SIEM typically does is aggregate logs. For example, the firewall identifies that an event occurred. Something happened, but the SIEM is unable to associate that event with the user name, the protocol, the application, or the bandwidth utilization of that application.

So it is not contextual, and that’s where we enter the picture with our contextual database. We gather that metadata from many different locations and put it into our database. Our customers use us as their primary dashboard, and we have actual technology integration with a repository like Splunk or Elasticsearch, or with deep packet inspection from Endace. So once you’ve identified the root cause, a timestamp, and the who, what, where, when, and why, you can immediately retrieve the incident-related information or log information out of storage to help you further (from a forensic standpoint) arrive at the proper incident response.
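As a rough illustration of what adding context to a bare log event can mean, the sketch below matches a firewall event to flow records by source address and time window. The `Flow` fields, the `context_for_event` helper, and the five-second `slack` tolerance are assumptions for this example and do not describe how Scrutinizer or any SIEM integration actually works.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    """Hypothetical flow summary carrying user and application context."""
    start_ts: int
    end_ts: int
    src_ip: str
    dst_ip: str
    user: str
    application: str
    bytes: int


def context_for_event(event_ts, event_src_ip, flows, slack=5):
    """Return flows from the same source that overlap the event time.

    `slack` widens the window (in seconds) to tolerate clock skew between
    the device that logged the event and the flow exporters.
    """
    return [
        f for f in flows
        if f.src_ip == event_src_ip
        and f.start_ts - slack <= event_ts <= f.end_ts + slack
    ]


flows = [
    Flow(100, 160, "10.0.0.5", "198.51.100.7", "alice", "ssh", 90000),
    Flow(300, 320, "10.0.0.5", "93.184.216.34", "alice", "https", 4000),
    Flow(110, 150, "10.0.0.9", "10.0.0.20", "bob", "dns", 400),
]

# A firewall log says only: "event at t=120 from 10.0.0.5".
for f in context_for_event(120, "10.0.0.5", flows):
    print(f.user, f.application, f.dst_ip, f.bytes)
```

The log entry alone gives a time and an address; the matching flow record supplies the user, the application, and the volume of traffic involved.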

Another advantage: because we gather summary information, the metadata, it’s a much more lightweight version of the data, so it’s far more cost effective and efficient to store that kind of data there.

Frankly, some of these SIEMs can be pretty darn big and expensive when you look at the backend database that their data is stored on.

| I understand your solution can be used in applications that monitor DDoS attacks. To what extent will your metadata database enable hard real-time applications? |

One of the big areas that the telcos are struggling with is the whole DDoS thing. This is a difficult challenge.

Having a system that can, in near real time, monitor the traffic that is traversing the telecom network and identify traffic associated with DDoS attacks is very valuable to them.

Our CEO, Mike Patterson, is very passionate about where the market is and where the opportunities are, and has written extensively on BCP 38.

The Internet Engineering Task Force put out BCP 38, Best Current Practice 38. Essentially, it says that service providers can monitor network traffic entering their network to look for instances where the source IP address is different from the IP range of that customer’s network. When traffic enters a service provider’s network that does not match the IP range of the originating network, that traffic should be filtered. In doing so, service providers would be able to significantly reduce the amount of DDoS traffic and significantly reduce the impact of global DDoS issues.

| I recently interviewed Evolve IP, which represents a new category of telecom. They call themselves a “cloud play provider” and they offer all their services through IP and the cloud: voice, data, desktop, and lots more. It seems like the Plixer solution is well suited for these kinds of companies. |
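The source-address check described here can be sketched in a few lines using Python’s standard `ipaddress` module. The customer prefixes and packet addresses below are made-up documentation ranges, and `is_spoofed` and `filter_ingress` are hypothetical helper names. In practice this filtering is enforced on edge routers rather than in application code; the sketch shows only the logic.

```python
import ipaddress


def is_spoofed(src_ip, customer_prefixes):
    """True if src_ip falls outside every prefix assigned to the customer."""
    addr = ipaddress.ip_address(src_ip)
    return not any(
        addr in ipaddress.ip_network(prefix) for prefix in customer_prefixes
    )


def filter_ingress(source_ips, customer_prefixes):
    """Split traffic arriving on a customer link into accepted and dropped."""
    accepted, dropped = [], []
    for src in source_ips:
        (dropped if is_spoofed(src, customer_prefixes) else accepted).append(src)
    return accepted, dropped


# Hypothetical customer with two assigned ranges.
prefixes = ["192.0.2.0/24", "198.51.100.0/25"]
arriving = ["192.0.2.17", "203.0.113.50", "198.51.100.100", "198.51.100.200"]

accepted, dropped = filter_ingress(arriving, prefixes)
print("accepted:", accepted)
print("dropped: ", dropped)
```

Here `198.51.100.200` is dropped because the customer holds only the lower half of that /24 (a /25 covers addresses .0 through .127), so a packet claiming that source cannot legitimately originate from this customer’s network.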

Yes, Dan. IPFIX metadata is an incredible untapped source of information that people have not capitalized upon and yet it’s already available in their networks.

It is just a matter of turning it on and pointing it at a tool like Scrutinizer to give you all this rich forensic data that you’re missing and you need.

When a security event occurs, if you try to use your IDS as your point of forensics, there’s no context there, and there are literally hundreds of thousands or millions of log events. It’s similar with the SIEM. So that integration point we offer is a huge market opportunity for providers looking for more affordable access to rich network information.

| How much does the software cost? How does an operator get started with the tool? |

We offer a free version of the software that provides full functionality for 30 days. After that, database information is limited to 5 hours in production. That’s not necessarily an enterprise production environment, but companies can start for free.

We price by the number of devices that export information to us. So if we’re collecting the NetFlow and IPFIX protocols and you have one router that is sending that information to us, an organization can get started for only a few thousand dollars. Our customer base ranges from very small to medium-sized enterprises all the way to the Fortune 50.

Copyright 2017 Black Swan Telecom Journal
